Key Points

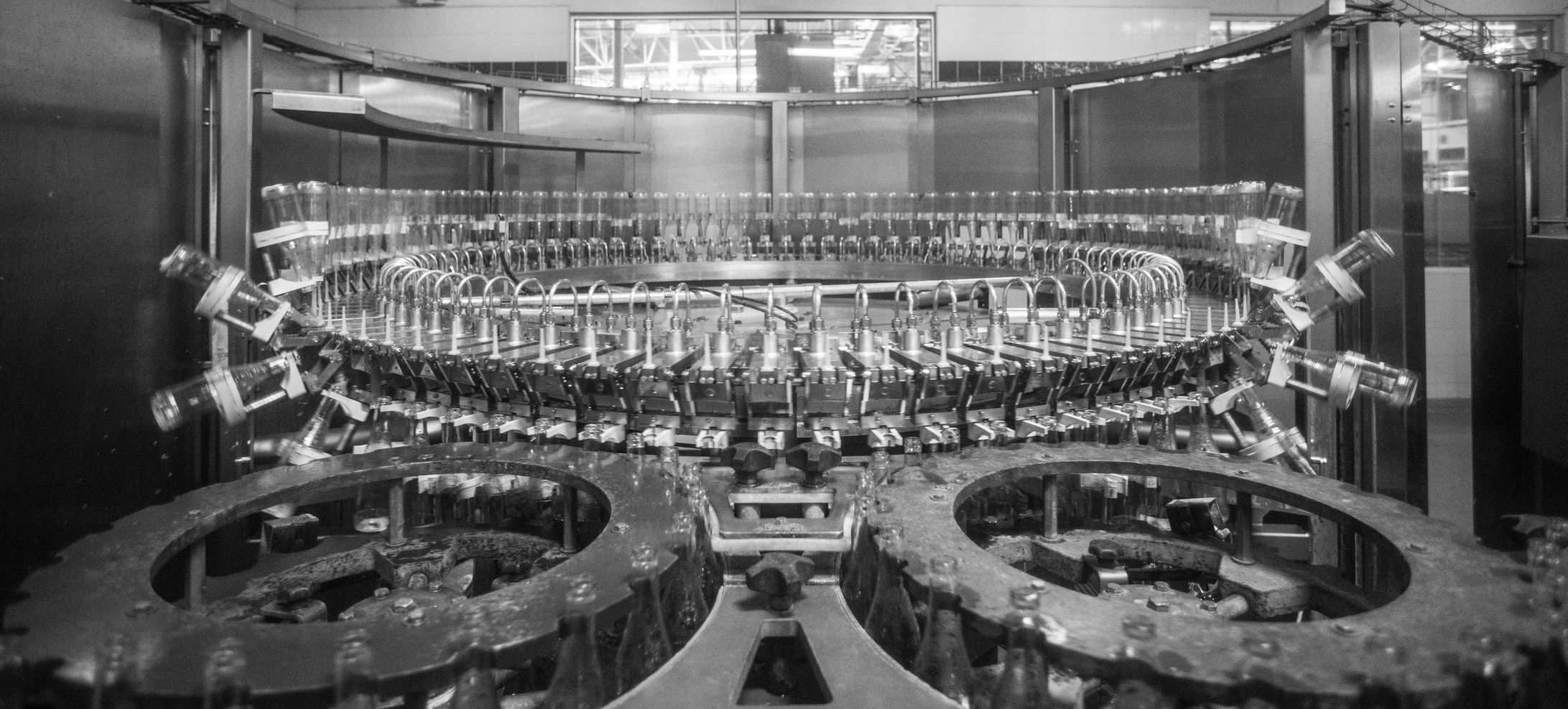

- A wider adoption of video/image data across industry could enable the development of new solutions to a large number of costly industrial problems such as manual inspection and defect detection

- Live processing of streamed video data can enable more effective reactive maintenance strategies for systems that depend on thousands of geographically dispersed assets, such as rail infrastructure

- The computational difficulties can be overcome by combining technologies such as TinyML and microcontrollers

Expanding Condition Monitoring with Video Data

The potential benefits of instrumenting with video data are extensive, with applications that improve reactive, predictive, and preventive maintenance strategies. Video inspection can replace time-consuming manual inspection tasks, improving safety through better social distancing and workforce management, and eliminating access restrictions.

A single multidimensional data source such as a video feed can contain far more information than a full suite of instrumentation and sensors. A thermal camera installed in view of a key system or piece of kit could be used to detect a range of failures, such as thermal abnormalities or cracking, in more explicit detail than standard condition monitoring approaches that rely on time-series data. The data from standard cameras and microphones can be used, in theory, to quantify any process that manifests itself visually or audibly, and using a wider range of imaging technology, such as the previously mentioned thermal cameras or LiDAR, can widen the applicability.

APPLICATIONS OF AUTOMATIC VISUAL INSPECTION ACROSS INDUSTRY INCLUDE:

- Detection of manufacturing failures or defect detection on production lines

- Debris or interference detection

- Spatial temperature trend analysis

- Monitoring of surface defects such as cracking in key structural assets

Deploying machine learning or AI techniques in these fields requires solving some complicated data handling problems because video data is so dense. If online predictions are required, live data streams need to run continuously, with well-trained and sophisticated deep learning models actively processing the incoming feed. These large models are often computationally expensive, with a trade-off between speed and accuracy to navigate. The prediction outputs need to be communicated efficiently to system owners and in sufficient time to direct effective action. The time delay between event occurrence and notification can be crucial for a visual inspection system to have a real impact in industry. For example, a delay between the detection of a manufacturing defect and its communication to plant owners could result in significant losses if rapid adjustments are required.

Object Detection Application in Rail

At Ada Mode, we have applied object detection using the YOLO (You Only Look Once) modelling framework, built on Darknet and developed by an amazing team of contributors, past and present. YOLO is an object detection architecture widely regarded as a leading choice for balancing speed and accuracy in video processing tasks.

For the rail market, the condition of overhead cable equipment is a particular area of interest. Failure of such equipment can cause the cable to drop, resulting in increased strain across the cable and reduced conduction efficiency. Managing more than 10,000 miles of track, some of which is electrified with overhead cables, is complex because of the sheer number and geographical spread of the assets. The current approach relies heavily on costly manual inspections or unreliable incident reports, resulting in significant delays between failure, detection, and maintenance, and hence increasing the risk of subsequent failure events and customer delays.

By automatically identifying the overhead cable infrastructure in video feeds recorded from the train, it would be possible to implement a more efficient reactive maintenance strategy by detecting faults automatically and early. This would allow for the prevention of further degradation to surrounding assets and the coordination of maintenance efforts without reliance on incident reports or scheduled inspections.

Using data scraped from this video, we have trained a custom object detection algorithm looking for specific overhead cable equipment.

Video Description: Testing the performance of a YOLOv4 pylon detection model on 4K video input. Trained on 1,600 labelled images over 6,000 batches with the recommended Darknet configuration, the model achieved a maximum mAP (mean average precision) of 97%.
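As a rough illustration of what a "recommended Darknet configuration" typically involves, the sketch below encodes the rules of thumb given in the Darknet custom-training guidance (AlexeyAB fork) as we understand them. The function name and the single "pylon" class are assumptions made for illustration; the exact configuration used for the model above is not documented here.

```python
def darknet_training_settings(num_classes: int, num_images: int) -> dict:
    """Rule-of-thumb values for a custom Darknet YOLO config (illustrative helper)."""
    # max_batches: 2000 per class, but no fewer than 6000
    # and no fewer than the number of training images.
    max_batches = max(num_classes * 2000, num_images, 6000)
    # Learning-rate steps at 80% and 90% of max_batches (integer arithmetic).
    steps = (max_batches * 8 // 10, max_batches * 9 // 10)
    # Filters in the convolutional layer directly before each [yolo] layer.
    filters = (num_classes + 5) * 3
    return {"max_batches": max_batches, "steps": steps, "filters": filters}


# Assumption: one class (pylon) and the 1,600 labelled images mentioned above.
settings = darknet_training_settings(num_classes=1, num_images=1600)
print(settings)
```

Under these assumptions the rule yields 6,000 batches, which is consistent with the figure quoted in the video description.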

With access to images of faulty overhead equipment, the model could be further trained to distinguish healthy operating assets from those that have failed. Expanding on this, the timestamp of the video feed, together with speed or location data, could be used to obtain the ID of the exact asset that has been detected as faulty.

By building up a robust set of failure images and linking it to location data, a fully automated reactive maintenance strategy for overhead assets could be implemented, with significant financial savings.
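One way this lookup could work is sketched below. It is a minimal example under strong assumptions: the train travels at constant speed from the start of the route, and a hypothetical asset register lists each asset's distance along the track. The asset IDs, positions, and function names are invented for illustration.

```python
from bisect import bisect_left

# Hypothetical asset register: (distance along route in metres, asset ID),
# sorted by distance.
ASSETS = [(120.0, "OLE-0001"), (185.0, "OLE-0002"), (251.0, "OLE-0003")]


def distance_travelled_m(timestamp_s: float, speed_kmh: float) -> float:
    """Distance from the route start in metres, assuming constant speed."""
    return timestamp_s * speed_kmh / 3.6


def nearest_asset(distance_m: float, assets=ASSETS) -> str:
    """Return the ID of the registered asset closest to the given distance."""
    positions = [pos for pos, _ in assets]
    i = bisect_left(positions, distance_m)
    # Only the neighbours either side of the insertion point can be nearest.
    candidates = assets[max(i - 1, 0): i + 1]
    return min(candidates, key=lambda a: abs(a[0] - distance_m))[1]


# A fault detected 3.4 s into the video while travelling at 200 km/h.
asset_id = nearest_asset(distance_travelled_m(3.4, speed_kmh=200))
print(asset_id)
```

In practice train speed varies, so the distance would more likely come from GPS fixes or from integrating a logged speed profile than from a single constant speed.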

Deployment Considerations

Implementing the reactive maintenance strategy described above is computationally expensive. Moving the data to the cloud for processing would require a fast, stable internet connection, which cannot be guaranteed on a moving train; outages could result in missed failures. Alternatively, TinyML/tiny-YOLO methods deployed on microcontrollers or single-board computers such as the Raspberry Pi could be installed on each train to process the video feed locally. This approach could reach processing speeds of ~4 fps on low-resolution video, which would capture a frame roughly every 13.9 m when travelling at 200 km/h. The 4K deployment shown here was used only to produce an HD video output; the target assets can still be detected after significant compression of the video (down to 480p), which can increase processing speed significantly. The suitable degree of compression depends on the input size of the selected model.
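The spacing between processed frames follows directly from train speed and frame rate. The short sketch below shows the arithmetic; the function name is ours, and the inputs are the 200 km/h and ~4 fps figures quoted above, giving a spacing of roughly 14 m.

```python
def frame_spacing_m(speed_kmh: float, fps: float) -> float:
    """Distance in metres the train travels between consecutive processed frames."""
    speed_ms = speed_kmh / 3.6  # convert km/h to m/s
    return speed_ms / fps


spacing = frame_spacing_m(speed_kmh=200, fps=4)
print(f"{spacing:.1f} m between frames")
```

The same function can be used to check whether a given frame rate leaves every asset visible in at least one frame, given how far ahead of the train an asset first becomes detectable.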

As is standard in computer vision tasks, the performance of the object detection model is expected to be sensitive to weather conditions, lighting, and the route travelled. In this demo the model was trained and tested on a single route. The route traverses ~118 km of Montenegrin countryside, and the model has been exposed to a variety of environmental conditions in training. However, the model's performance is expected to fluctuate under certain environmental conditions. For example, the model was not trained to handle tunnels and can struggle in mountainous or densely forested regions where the pylons blend in with the environment.

It would be best practice to train the detection model across a wide range of routes, weather conditions, and times of day. YOLOv4 introduced a wider set of data augmentation tricks to help with these standard computer vision problems. If the model still struggles in certain situations, these areas can be identified and subjected to a more focused manual inspection.

There are also expected to be design differences between the overhead cable infrastructure used in Montenegro and that used in the UK. By replicating the rapid data collection and modelling used to produce this case study, the process can be adapted to new, more relevant equipment designs or target components.

A further complication is caused by train travel speeds. Because of motion blur, the faster a train travels, the higher the video frame rate required to capture images with sufficient fidelity to detect assets accurately. This would require trains to be instrumented with higher-spec imaging technology.
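To give a feel for the scale of the problem, the sketch below estimates how far the train moves while a single frame is being exposed. This is a simplification: it assumes blur is governed by exposure time (which can be no longer than the frame interval), and the 1/500 s exposure is an illustrative value, not a measured camera setting.

```python
def blur_distance_mm(speed_kmh: float, exposure_s: float) -> float:
    """Distance in millimetres the train moves while the shutter is open."""
    speed_ms = speed_kmh / 3.6  # convert km/h to m/s
    return speed_ms * exposure_s * 1000


# Assumed exposure time of 1/500 s at two example speeds.
for speed in (100, 200):
    blur = blur_distance_mm(speed, exposure_s=1 / 500)
    print(f"{speed} km/h: {blur:.1f} mm of travel per exposure")
```

Doubling the speed doubles the distance travelled per exposure, so keeping blur constant at higher speeds means halving the exposure time, which in turn demands a more sensitive camera or better lighting.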

Final Thoughts

Ada Mode have demonstrated how to effectively apply machine learning techniques to video data for industrial improvement, with an example in the rail sector. The potential applications go far beyond those discussed here. Stay tuned for how we can make use of audio data for fault detection and how we use image processing to detect surface-level defects.